Training scripts often look deceptively small. A few dozen lines of parameters can hide weeks of decisions.

This project is a good example. The core training call is compact, but behind it sits a full stack of assumptions about transfer learning, augmentation, optimization, hardware stability, and diminishing returns.

Start with Transfer Learning, Not Heroics

The project uses pretrained YOLOv11 weights instead of starting from random initialization.

That single line carries most of the project's leverage.

Transfer learning works because the early and middle layers of modern vision models already know how to recognize useful patterns like edges, corners, textures, and shapes. The custom training phase does not need to rediscover vision from first principles. It only needs to adapt those learned features to MTG card regions.

Without transfer learning, a small custom dataset would force the model to spend precious capacity learning generic image structure instead of task-specific geometry.

Why YOLOv11n?

A common mistake in ML projects is to jump to larger models too early. This repo does the opposite. It starts with the smallest practical model and validates the ceiling before scaling up.

That is defensible for three reasons:

- The task has only seven classes.

- The dataset is limited.

- Fast iteration is worth more than theoretical capacity you cannot fully exploit.

The experiment logs confirm the intuition. Larger or higher-resolution runs did not reliably dominate the best nano baseline.

The Core Training Shape

The local training setup is clear:

That tells us a lot:

- finite epoch budget

- early stopping as a safety valve

- AdamW over plain SGD

- cosine decay over static learning rate

- CPU training for local stability

Each of these choices has a practical reason.

The Local Hardware Constraint Is Real

The repo explicitly documents why local training uses CPU on Apple Silicon: MPS instability during training in the relevant software stack.

That is exactly the kind of detail glossy ML tutorials omit. But it matters.

If the hardware path with the highest theoretical throughput corrupts training or fails unpredictably, it is the wrong path. Stability matters more. A slower configuration that behaves consistently is better engineering than a faster fragile one.

Augmentation Is How the Model Learns Real Life

The strongest document in the object-detection repo may be docs/training-strategies.md because it ties every augmentation to both research history and project-specific reasoning.

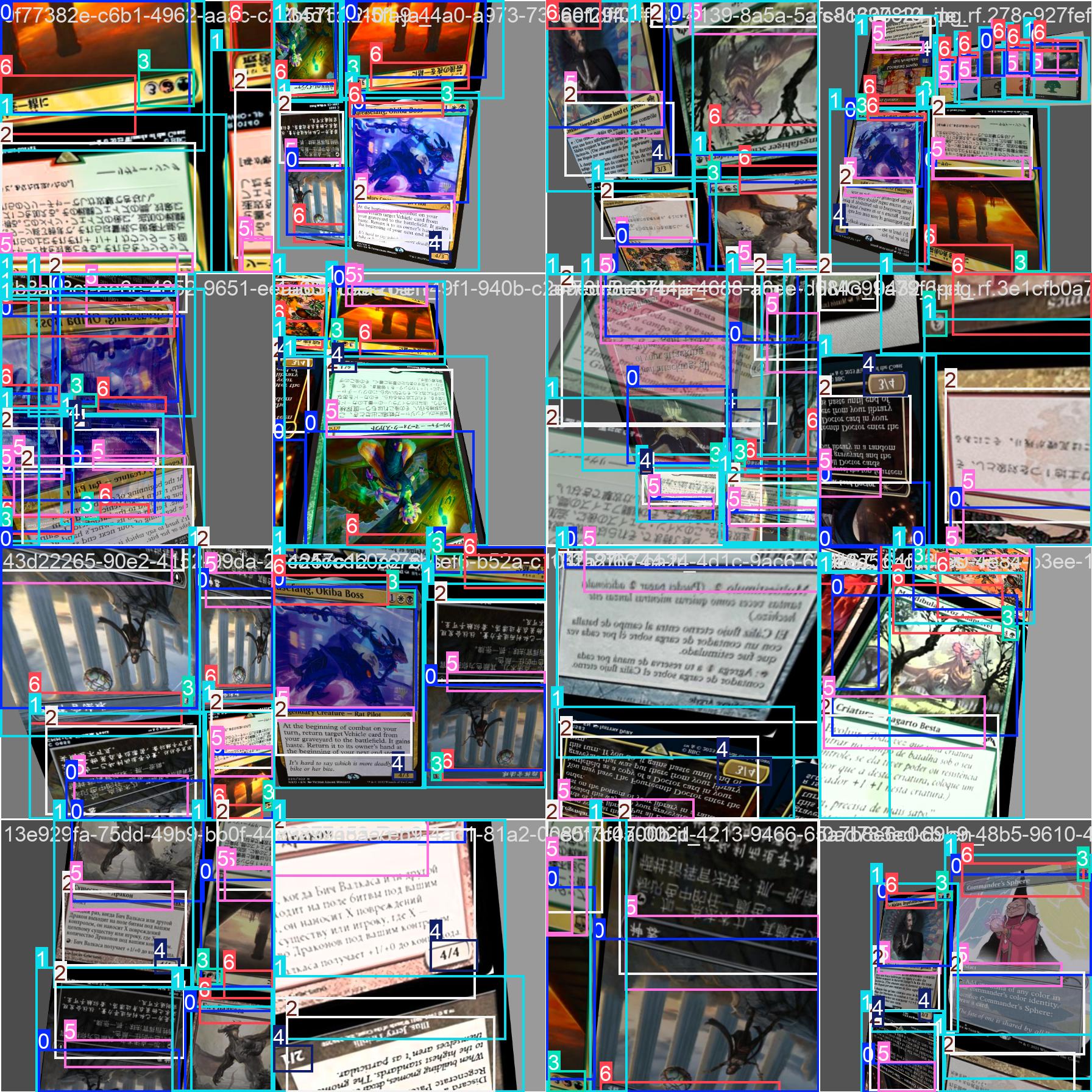

Mosaic

Mosaic combines four images into one composite training sample. In this project, that matters because it creates more scale variety and more small-object situations.

Why it fits this task:

- the dataset is not massive

- small regions like

mana-costandpowerneed more variety - the detector must not overfit background context

Primary reference: YOLOv4 by Bochkovskiy, Wang, and Liao.

Mixup

Mixup blends examples to discourage overconfidence and brittle decision boundaries.

For a card detector, the main practical benefit is not abstract regularization language. It is reducing the chance that the model learns silly correlations like "fingers nearby mean art box here" or "wood grain implies card boundary."

Primary reference: Zhang et al., mixup: Beyond Empirical Risk Minimization.

Multi-Scale Training

The webcam use case guarantees scale variation. Cards will appear close to the camera, far from it, tilted, partially occluded, and inconsistently framed. Multi-scale training is how the model sees that distribution before the user does.

Primary references: SSD and later YOLO practice.

Geometric and Color Augmentation

The repo uses:

- rotation

- perspective

- shear

- HSV variation

- horizontal flip

- random erasing

Together these encode the actual environment of the product:

- handheld cards are not perfectly upright

- lighting is inconsistent

- parts of the card can be covered by fingers

- framing is imperfect

This is what good augmentation looks like. It is not "more transformations." It is targeted simulation of deployment reality.

Optimization Choices

The training document also explains why optimization is not an afterthought.

AdamW

AdamW is used because it tends to behave well on smaller datasets and decouples weight decay from the raw parameter update. That is a practical choice, not a dogmatic one.

Primary reference: Loshchilov and Hutter, Decoupled Weight Decay Regularization.

Cosine Learning Rate Decay

Cosine decay gives the model large corrective steps early and smaller refinement steps later. That shape is useful when fine-tuning pretrained weights because you want fast adaptation without endlessly overshooting once the model has found a good basin.

Primary reference: SGDR by Loshchilov and Hutter.

Early Stopping

This repo uses early stopping with patience. That is not laziness. It is an acknowledgment that the marginal return on extra epochs can become negative once validation quality plateaus.

Primary reference: early stopping theory and common modern practice in vision training.

Cloud Training: Useful, but Not Automatically Better

The repo includes several RunPod-based experiments with larger models and higher resolutions.

That experiment history is valuable because it kills a common assumption: if n is good, then m at 1280 must be better.

Not necessarily.

If annotation quality sets part of the ceiling, larger models can simply memorize noise more efficiently. They also cost more. That combination increases runtime and tuning complexity without improving the production trade-off.

This project's logs point to a sober conclusion: the best balanced result remained the smaller baseline.

The Big Lesson

Training quality here comes from alignment, not excess:

- model capacity aligned with dataset size

- augmentations aligned with deployment reality

- optimizer aligned with fine-tuning behavior

- hardware aligned with stability

- experiments aligned with measurement instead of hype

That is a mature training story.

Conclusion

The best training setups rarely look dramatic. They look coherent.

This repo trains the detector the way strong engineering teams usually win: start with a stable baseline, understand the data, justify every augmentation, and let results kill your assumptions.

In the next article, we will read those results properly. Metrics only help when you can interpret them. Otherwise they are just decoration.

Further Reading

- Training strategy deep dive:

docs/training-strategies.md - Parameters reference:

docs/parameters.md - Experiment logs:

docs/training-v2-status.md - Cost analysis:

docs/training-cost-analysis.md - YOLOv4 / Mosaic: https://arxiv.org/abs/2004.10934

- mixup: https://arxiv.org/abs/1710.09412

- Decoupled Weight Decay: https://arxiv.org/abs/1711.05101

- SGDR: https://arxiv.org/abs/1608.03983

- Mixed Precision Training: https://arxiv.org/abs/1710.03740